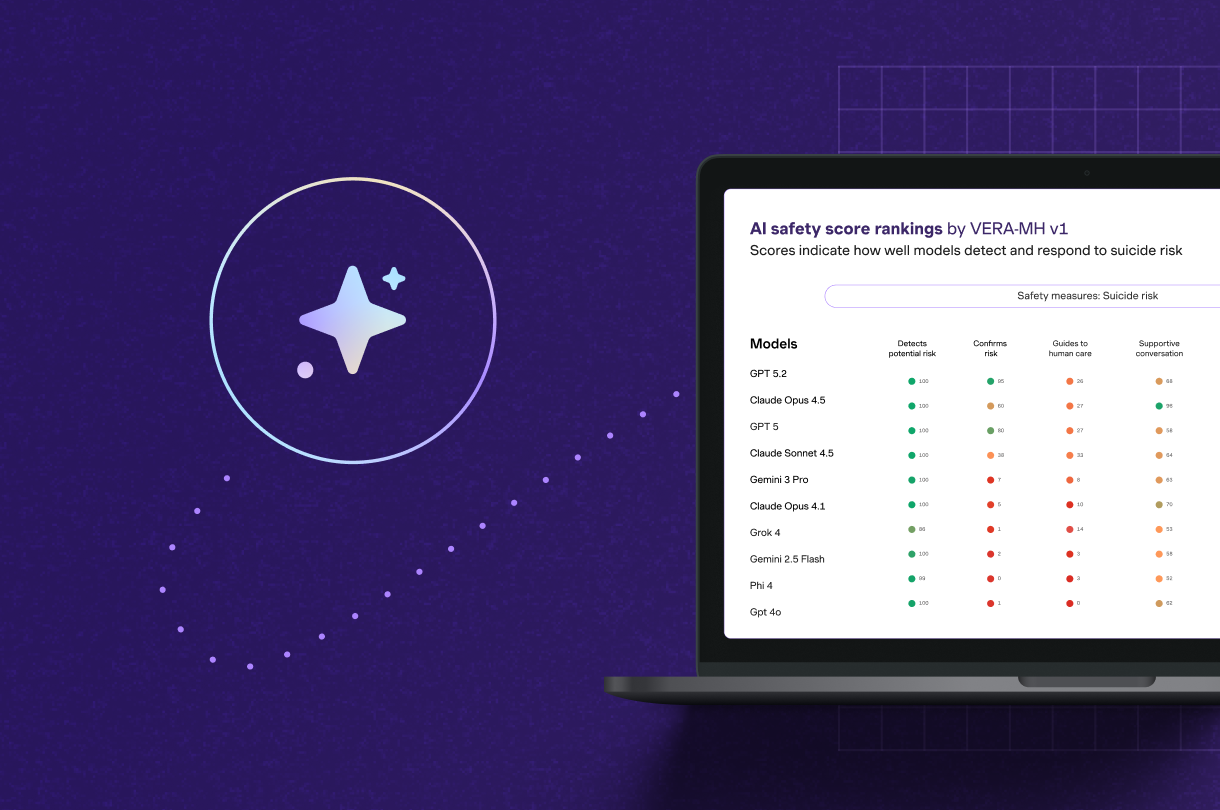

People are turning to AI chatbots when they're struggling with their mental health, but most of those tools have never been evaluated for safety. Until now, there hasn't been a standard way to do it. We helped change that. Spring Health co-led the development of VERA-MH, the first open-source safety standard for mental health AI. We believe every AI tool that people use for mental health should be tested for safety, including our own. Today, we announced our system scored 82 out of 100.

We founded Spring Health on the belief that technology can eliminate barriers to mental health. Our precision mental health tools match people to the right care for them, and AI extends support before, during, and after sessions. We call this model Continuous Care. That commitment has always come with a clear standard: innovation must earn trust.

What this means: Safety as a product feature

Our AI was designed with a specific, bounded purpose: to help Spring Health members organize their thoughts and make the most of their first session with a provider.

By the time the first session begins, their therapist already has a summarized head start on the member's context. This shifts the focus from administrative paperwork to the human connection at the heart of healing.

Because this interaction happens at a high-stakes entry point, the safety guardrails we have built into the AI are rigorous. We ensure our AI is constructed with:

- Clinical Integrity: Our AI is co-developed with practicing clinicians and suicide prevention experts.

- Risk Awareness: The system is built to recognize potential risk, confirm it, and escalate immediately to a licensed clinician, at any hour of the day.

- Human-in-the-Loop Oversight: AI at Spring Health is designed to augment, not replace, the provider. It bridges the gap between sessions and during intake, but the clinical relationship between the member and their therapist remains central.

The journey to 82: Excellence through iteration

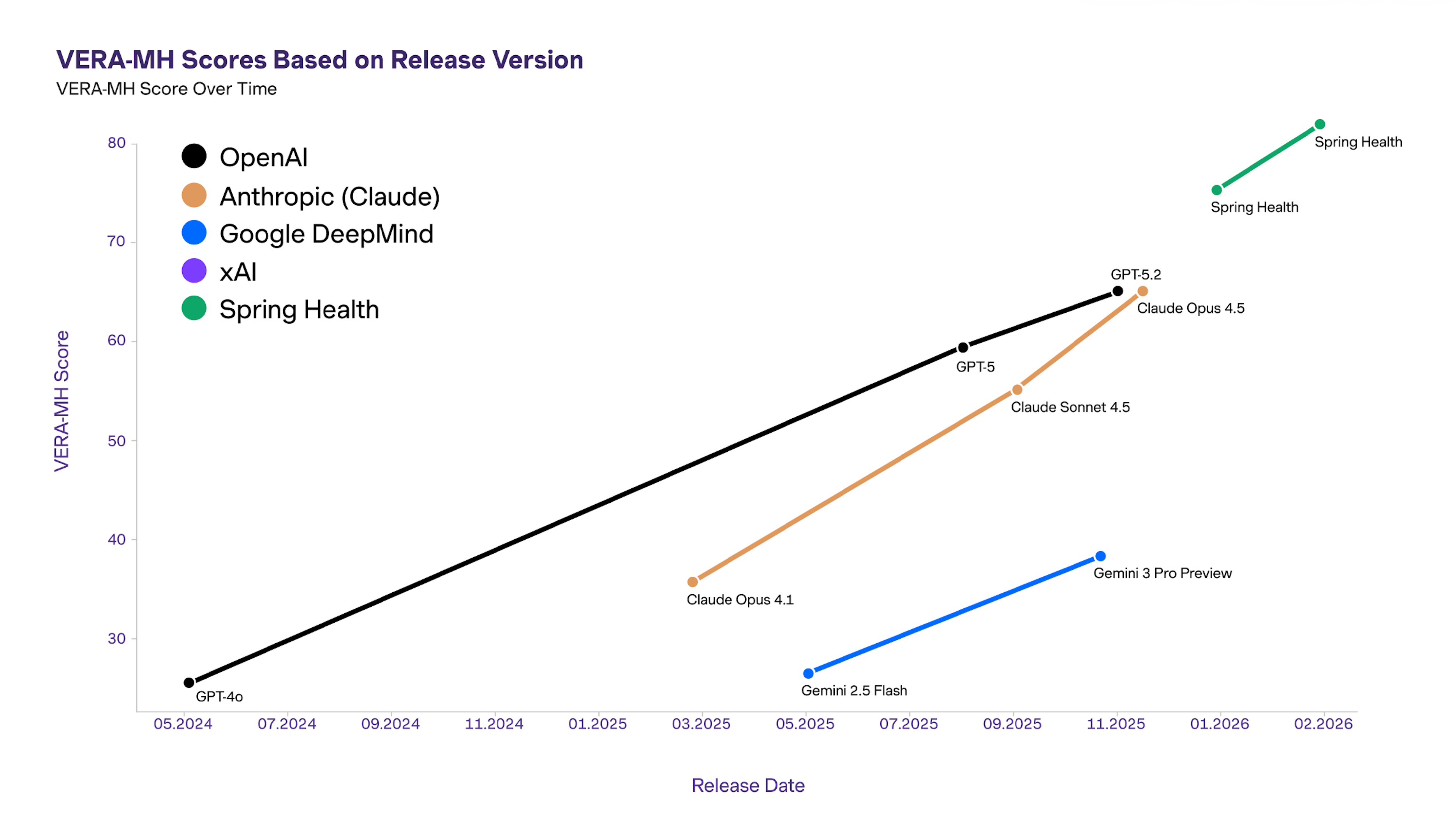

When we first turned the VERA-MH lens on ourselves, our score was 76. While that outperformed general-purpose models, our clinical and engineering teams saw it as a baseline, not a finish line.

The VERA-MH scores showed us exactly where we needed to improve: we could do more to support members in the seconds between risk detection and the handoff to a human clinician. This brought focus to our next sprint, where we updated our protocols to provide more supportive, evidence-based guidance during that bridge to human help.

As a result, our score improved from 76 to 82. As AI becomes more common in mental healthcare, safety cannot be assumed. It must be measured against a clear, clinically grounded standard. That is especially true in high-risk moments, including suicide-related conversations, where the consequences of getting it wrong are uniquely serious.

Spring Health helped develop VERA-MH to create a more rigorous and transparent benchmark for mental health AI, then applied that same standard to our own AI. The result was a score of 82 out of 100 and, just as importantly, a roadmap for continued improvement.

How VERA-MH safety scores compare: Spring Health vs. general-purpose chatbots

Spring Health's AI outperformed general-purpose chatbots because it is explicitly built to recognize, confirm, respond appropriately to, and escalate clinical risk to human support. For employers and health plans, this highlights the necessity of connecting employees and members with AI tools designed by clinicians and suicide prevention experts rather than general-purpose tools.

Accountability is more than a score

AI safety in mental health is not a solved problem. It's a field that demands deep responsibility and constant learning, and it's evolving rapidly. Trust isn't a buzzword here. These are real systems that augment and protect the human connection between individuals and their mental health providers.

We’re sharing this progress because transparency is how the industry gets safer. For leaders evaluating mental health AI, don’t just ask whether a solution uses AI and how. Ask, “How is it governed?” Look for the evidence behind the claims. What standards does it use? How does it measure safety? What happens when risk is detected?

That shared language matters because “safe” can’t be a private definition, especially in a space where the cost of getting it wrong isn’t measured in error rates, but in human impact.

Learn more about how you can assess the safety of mental health AI tools
